Elegant Rust with proc macros

When writing immediate mode (egui) applications, I quickly learned that nearly all logic computations should be done off the UI thread. There are many ways to approach this; however, as a fan of event-based systems, sooner or later I implement some kind of event handling. The pattern is almost the same each time, with minor differences, and looks like this:

    use std::collections::VecDeque;
    use std::sync::{Arc, RwLock};

    #[derive(Clone)]
    pub struct ProcessorConfig { pub id: String }

    #[derive(Clone)]
    pub struct TaskResult { pub success: bool }

    pub struct State { pub active_tasks: Vec<u32> }

    #[derive(Clone)]
    pub enum Event {
        ProcessorStart { config: ProcessorConfig },
        ProcessorStop,
        LongRunningTask { id: u32 },
        LongRunningTaskComplete { task_result: TaskResult },
    }

    struct EventHandler {
        state: Arc<RwLock<State>>,
        tx: tokio::sync::mpsc::Sender<Event>,
    }

    impl EventHandler {
        // Dispatch every event to its dedicated processor function.
        fn handle(&self, event: Event, event_queue: &mut VecDeque<Event>) {
            use Event::*;
            match event {
                ProcessorStart { .. } => self.process_processor_start(event, event_queue),
                ProcessorStop => self.process_processor_stop(event, event_queue),
                LongRunningTask { .. } => self.process_long_running_task(event, event_queue),
                LongRunningTaskComplete { .. } => self.process_long_running_task_complete(event, event_queue),
            }
        }

        fn process_processor_start(&self, event: Event, _event_queue: &mut VecDeque<Event>) {
            // Each processor unpacks its own variant again; this is the boilerplate.
            let Event::ProcessorStart { config } = event else {
                return;
            };
            println!("Starting processor with config: {}", config.id);
            // ...
        }

        fn process_processor_stop(&self, event: Event, _event_queue: &mut VecDeque<Event>) {
            let Event::ProcessorStop = event else {
                return;
            };
            println!("Stopping processor...");
            // ...
        }

        fn process_long_running_task(&self, event: Event, _event_queue: &mut VecDeque<Event>) {
            let Event::LongRunningTask { id } = event else {
                return;
            };
            println!("Starting task {}", id);
            if let Ok(mut state) = self.state.write() {
                state.active_tasks.push(id);
            }
        }

        fn process_long_running_task_complete(&self, event: Event, _event_queue: &mut VecDeque<Event>) {
            let Event::LongRunningTaskComplete { task_result } = event else {
                return;
            };
            println!("Task completed. Success: {}", task_result.success);
            if let Ok(mut state) = self.state.write() {
                state.active_tasks.clear();
            }
        }

…

Design rationale

The code above carries design decisions developed over the years, so it has some specific needs “baked in”. For example:

- Serialization/deserialization and Clone for Event are very important. I want to save the event stream so that I can recreate the state, re-run an event, create an event log, etc. Starting with the serialization and Clone requirement makes sure that all events have an adequate shape.

- Arc<RwLock<State>> makes it possible to defer processing to background threads, which then call back with a state modification. The handler also keeps a Sender<Event> for interacting with the event system.

- &mut VecDeque<Event> is an event pushback. It works with the following loop pattern:

      loop {
          let first = rx.recv().await?;
          queue.push_back(first);
          while let Ok(ev) = rx.try_recv() {
              queue.push_back(ev);
          }
          while let Some(ev) = queue.pop_front() {
              self.handle(ev, &mut queue).await;
          }
      }

  Doing self.tx.clone().send(...) in the same thread could end up deadlocking the receiver and the whole application: the channel is bounded, so a handler waiting for free capacity is the same code that is supposed to drain the channel. To avoid the deadlock, a same-thread processor can push the event directly to the queue instead. Another feature that comes with the pattern is in-place event transformation. For example, it allows upgrading events without changing the processing order (by calling event_queue.push_front in the processing logic).
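As a rough sketch of the loop and the pushback queue described above, here is a std-only version. It swaps the tokio channel for std::sync::mpsc so it runs without an async runtime; the LegacyTask variant, the log vector and the demo function are hypothetical and exist only to make the processing order visible.

```rust
use std::collections::VecDeque;
use std::sync::mpsc::{channel, Receiver};

#[derive(Debug, Clone, PartialEq)]
pub enum Event {
    // Hypothetical legacy event that gets upgraded in place.
    LegacyTask { id: u32 },
    LongRunningTask { id: u32 },
    LongRunningTaskComplete { success: bool },
}

/// Handles one event and records the order in which events were processed.
fn handle(event: Event, queue: &mut VecDeque<Event>, log: &mut Vec<Event>) {
    match event {
        // In-place upgrade: the replacement goes to the FRONT of the queue,
        // so it is processed next, ahead of everything already waiting.
        Event::LegacyTask { id } => queue.push_front(Event::LongRunningTask { id }),
        // Follow-up event: pushed to the BACK of the local queue,
        // without going through the channel at all.
        Event::LongRunningTask { id } => {
            log.push(Event::LongRunningTask { id });
            queue.push_back(Event::LongRunningTaskComplete { success: true });
        }
        other => log.push(other),
    }
}

/// Blocks for the first event, drains whatever else is pending, then works
/// through the local queue. Returns the log once all senders are dropped.
pub fn run(rx: Receiver<Event>) -> Vec<Event> {
    let mut queue = VecDeque::new();
    let mut log = Vec::new();
    while let Ok(first) = rx.recv() {
        queue.push_back(first);
        while let Ok(ev) = rx.try_recv() {
            queue.push_back(ev);
        }
        while let Some(ev) = queue.pop_front() {
            handle(ev, &mut queue, &mut log);
        }
    }
    log
}

/// Sends two events, closes the channel and returns the processing order.
pub fn demo() -> Vec<Event> {
    let (tx, rx) = channel();
    tx.send(Event::LegacyTask { id: 1 }).unwrap();
    tx.send(Event::LongRunningTask { id: 2 }).unwrap();
    drop(tx);
    run(rx)
}
```

In this sketch the upgraded LegacyTask is still handled before the task with id 2, because push_front keeps its place in line, while the completion events land behind everything that was already queued.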

I’ll note that in the example I didn’t use the Envelope pattern, even though in the final code I do. In a nutshell: Envelope replaces Event with a wrapper-like structure, i.e. Envelope { event: Event, ... }. The envelope can carry extra data that doesn’t affect processing but provides useful information. Such data can be, for example, a tracing::Span (almost required for telemetry) or, in distributed systems, the origin system, timestamps, correlation data etc. Envelopes should be processed in the same way by all processors, and they add to the boilerplate. An EventEnvelope processor could look like this:

    #[tracing::instrument(skip_all, parent = &envelope.span)]
    fn process_some_task(&self, envelope: EventEnvelope, event_queue: &mut VecDeque<EventEnvelope>) {
        CustomCounter::count_origin(envelope.origin);
        let Event::SomeTask { value } = envelope.event else { return; };
        // ...
    }
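To make the envelope idea a bit more concrete, here is a minimal std-only sketch. The field names (origin, correlation_id, created_at), the follow_up helper and the SomeTaskDone variant are assumptions for illustration, not the shape used in the real project, and the tracing::Span is left out so the example has no dependencies.

```rust
use std::collections::VecDeque;
use std::time::SystemTime;

#[derive(Debug, Clone, PartialEq)]
pub enum Event {
    SomeTask { value: u32 },
    // Hypothetical follow-up event.
    SomeTaskDone { doubled: u32 },
}

/// Wrapper that carries metadata alongside the event itself.
#[derive(Debug, Clone)]
pub struct EventEnvelope {
    pub event: Event,
    pub origin: String,        // hypothetical: which system produced the event
    pub correlation_id: u64,   // hypothetical: ties related events together
    pub created_at: SystemTime,
}

impl EventEnvelope {
    pub fn new(event: Event, origin: &str, correlation_id: u64) -> Self {
        Self {
            event,
            origin: origin.to_string(),
            correlation_id,
            created_at: SystemTime::now(),
        }
    }

    /// Builds an envelope for a follow-up event that inherits the metadata.
    pub fn follow_up(&self, event: Event) -> Self {
        Self {
            event,
            origin: self.origin.clone(),
            correlation_id: self.correlation_id,
            created_at: SystemTime::now(),
        }
    }
}

/// The processor only looks at `event`; the metadata just travels along.
pub fn process_some_task(envelope: EventEnvelope, queue: &mut VecDeque<EventEnvelope>) {
    let Event::SomeTask { value } = &envelope.event else {
        return;
    };
    let next = envelope.follow_up(Event::SomeTaskDone { doubled: value * 2 });
    queue.push_back(next);
}
```

The processing logic never reads the metadata, yet every follow-up event keeps the same origin and correlation id, which is what makes an event log or a trace easy to stitch together later.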

A Perfect World

After implementing the same thing thrice I was ready to make a macro for it. I love macros, even though I understand that they obscure the code and add complexity. This is the same code as the initial example, but in a proc-macro version.

    use std::sync::{Arc, RwLock};

    #[derive(Clone)]
    pub struct ProcessorConfig { pub id: String }

    #[derive(Clone)]
    pub struct TaskResult { pub success: bool }

    pub struct State { pub active_tasks: Vec<u32> }

    struct EventHandler {
        state: Arc<RwLock<State>>,
        tx: tokio::sync::mpsc::Sender<Event>,
    }

    #[event_macros::event_processor]
    impl EventHandler {
        // The handler arguments become the fields of the matching Event variant.
        #[handler(ProcessorStart)]
        fn process_processor_start(&self, config: ProcessorConfig) {
            println!("Starting processor with config: {}", config.id);
            // ...
        }

        #[handler(ProcessorStop)]
        fn process_processor_stop(&self) {
            println!("Stopping processor...");
            // ...
        }

        #[handler(LongRunningTask)]
        fn process_long_running_task(&self, id: u32) {
            println!("Starting task {}", id);
            if let Ok(mut state) = self.state.write() {
                state.active_tasks.push(id);
            }
        }

        #[handler(LongRunningTaskComplete)]
        fn process_long_running_task_complete(&self, task_result: TaskResult) {
            println!("Task completed. Success: {}", task_result.success);
            if let Ok(mut state) = self.state.write() {
                state.active_tasks.clear();
            }
        }
    }

I really, really like it. The code this example expands to is the same as the initial code.

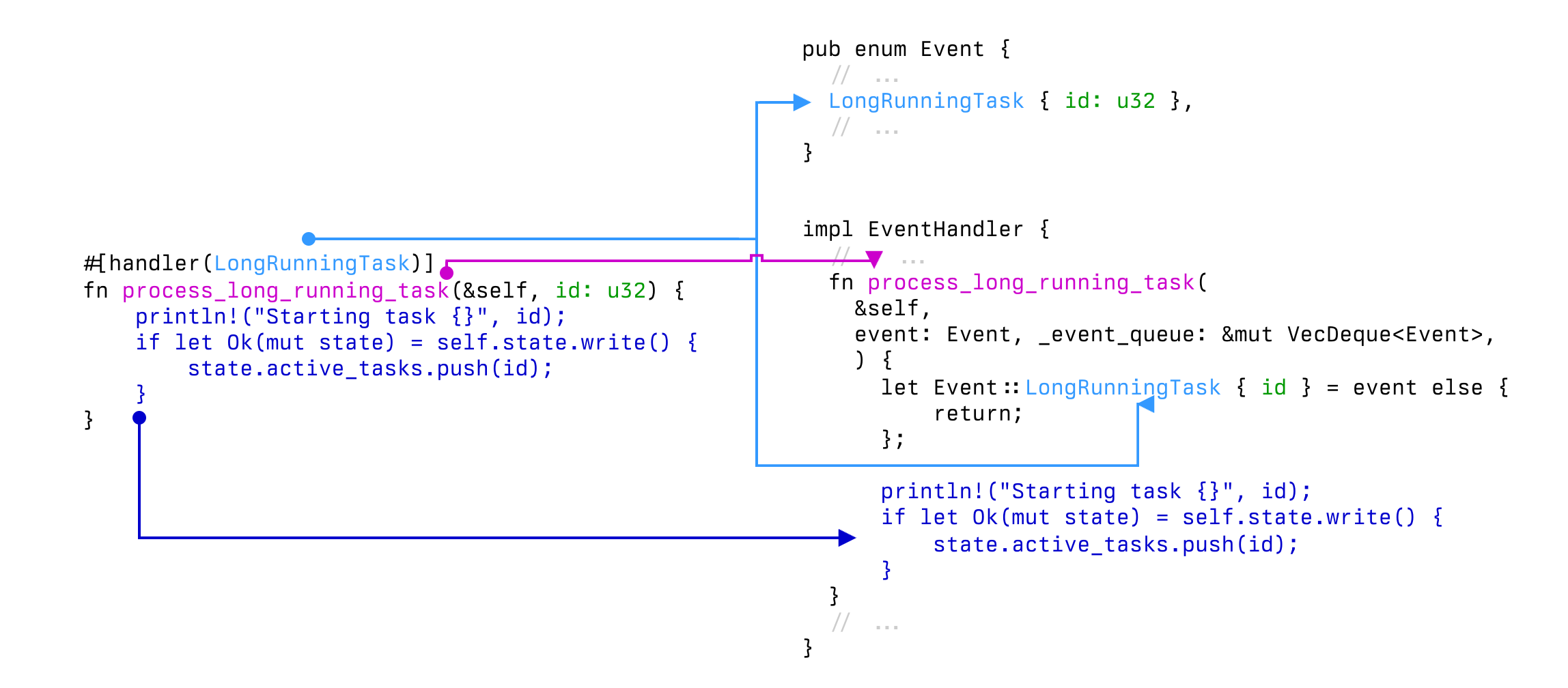

What are the transformations doing?

At the top there is:

    #[event_macros::event_processor]
    impl EventHandler {
        // ...
    }

That’s the actual entry point of the macro. It gathers all the information needed and from that it outputs:

- the full pub enum Event { ... } definition
- the run(&self, event: Event, event_queue: &mut VecDeque<Event>) implementation (listen, add to queue, handle from queue)
- impl Debug for Event (for debugging purposes it’s helpful to know the name of the current event)
- the transformed fn process_ ... functions
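One of the generated pieces is easy to picture by hand. The following is only a guess at what a name-only Debug implementation could look like, written out manually for a cut-down, hypothetical Event enum; the real macro output may well differ.

```rust
use std::fmt;

// Cut-down, hypothetical version of the generated enum.
pub enum Event {
    ProcessorStart { id: String },
    ProcessorStop,
    LongRunningTask { id: u32 },
}

// Prints only the variant name and skips the payload, which keeps
// log lines short and avoids requiring Debug on every field type.
impl fmt::Debug for Event {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        let name = match self {
            Event::ProcessorStart { .. } => "ProcessorStart",
            Event::ProcessorStop => "ProcessorStop",
            Event::LongRunningTask { .. } => "LongRunningTask",
        };
        f.write_str(name)
    }
}
```

With this in place, formatting any event with {:?} yields just its name, which is usually enough to see which handler is currently running.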

Visualized, this is how the transformation happens:

Why proc-macro at all

If I tried hard enough I could (probably) achieve a similar result with a macro_rules! macro. And I tried!

There is, however, one thing that I really hate when working with such macros: rust-analyzer and rustfmt giving up on everything. I don’t get inspection, I don’t get completion, I don’t get formatting. For simple macros that’s fine - I can live with a couple of non-completed arguments. Yet with event-driven architecture the core logic actually lives in handlers, so I didn’t want to be deprived of these helpers.
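For comparison, a declarative attempt might look roughly like the sketch below. This is not the macro I tried; the events! name and its syntax are made up for illustration, and State is folded into the handler to keep the example dependency-free.

```rust
// Hypothetical macro_rules! version: one invocation declares the Event enum
// and the dispatching `handle` method together.
macro_rules! events {
    ($( $variant:ident { $( $field:ident : $ty:ty ),* } => $handler:ident ),* $(,)?) => {
        #[derive(Debug, Clone)]
        pub enum Event {
            $( $variant { $( $field: $ty ),* } ),*
        }

        impl EventHandler {
            pub fn handle(&mut self, event: Event) {
                match event {
                    // Unpack the variant and pass its fields as arguments.
                    $( Event::$variant { $( $field ),* } => self.$handler($( $field ),*) ),*
                }
            }
        }
    };
}

#[derive(Default)]
pub struct EventHandler {
    pub active_tasks: Vec<u32>,
}

// Handlers stay ordinary methods outside the macro invocation.
impl EventHandler {
    fn process_long_running_task(&mut self, id: u32) {
        self.active_tasks.push(id);
    }

    fn process_long_running_task_complete(&mut self, _success: bool) {
        self.active_tasks.clear();
    }

    fn process_processor_stop(&mut self) {}
}

events! {
    LongRunningTask { id: u32 } => process_long_running_task,
    LongRunningTaskComplete { success: bool } => process_long_running_task_complete,
    ProcessorStop {} => process_processor_stop,
}
```

Here the handler bodies are kept outside the invocation, so every event still has to be described twice (once in events!, once in the method signature). Moving the bodies into the macro would remove that duplication, but that is the step where editor support tends to suffer, as described above.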

When switching to proc-macros, an interesting part is that the processor functions are perfectly valid ones! rust-analyzer sees input and output types. I also do some magic (that I don’t like but hey…): when EventEnvelope or VecDeque<EventEnvelope> is among the function inputs, I forward the variable name to the function body, i.e.

    #[handler(WithEnvelope)]
    fn process_with_envelope(&self, my_envelope: EventEnvelope) { ... }

becomes:

    fn process_with_envelope(&self, env: EventEnvelope, event_queue: &mut VecDeque<EventEnvelope>) {
        let my_envelope = env;
        // ... rest of the code
    }

Production code

You can see the implementation of the (macroized) code in one of my open source projects.

There are, however, some notable differences:

- As I mentioned before, I’m using EventEnvelope and tracing for telemetry, so the actual code is slightly more complex:

      fn process_my_event(
          &self,
          env: EventEnvelope,
          queue: &mut ::std::collections::VecDeque<EventEnvelope>,
      ) {
          let Event::MyEvent { item, value } = env.event else {
              unreachable!();
          };
          {
              println!("Hello from MyEvent");
          }
      }

- The handle and run functions are asynchronous (because my spawned processor is an asynchronous one).

…implementation?

It’s here. I won’t go into the implementation itself, but I need to say that TODAY it’s easier than it was some time ago.

LLMs are quite capable of generating proc-macro code given simple instructions, and once I grasped the main concept it was actually quite easy (especially using the proc-macro, proc-macro2 and syn crates). In case you wonder, the most important pieces are:

- proc-macro is the only crate type that can export proc-macros, and every macro in it must produce proc_macro::TokenStream as output

- proc-macro2 provides many utilities, but outputs proc_macro2::TokenStream - a different type, but easily convertible to and from proc_macro::TokenStream

- syn turns actual code into structs - things like ItemFn or Variant for enums; you can also construct them by hand

- syn uses proc-macro2 for utilities

- code pieces can be generated using quote! from the quote crate (or quote_spanned! if you want to hint to the user what the actual source of an error is)

- parse_quote! can be used to fill in syn structs, e.g. method.sig = parse_quote! { fn some_func(a: u32, b: u32) -> u32 };

Once I internalized these pieces, and with some help from LLMs, the implementation was quite smooth.

At the end of the day

…I’m really happy with the result. Adding new events was a chore that usually required putting code in at least four places:

- call site
- Event enum
- handle function
- boilerplate-heavy processor_function

After the change it became only 2, much simplified and free of boilerplate.

I considered packing this into a crate (because I KNOW this pattern is good and reusable) but I have some doubts about it.

I don’t know if there’s any audience: would anyone use such a crate? No idea. Maybe such people exist. It’s also opinionated: it uses tracing for telemetry and serde for serialization. It also creates raw Event and EventEnvelope types in the current namespace, which might conflict with existing names. On top of that, it requires an async run to dispatch events to handle.

So maybe someday, but if anyone stumbles upon it, the proc-macro implementation is only 300 lines of code (excluding tests) and is pretty straightforward to modify. Maybe it’s enough?

Przemysław Alexander Kamiński

vel xlii vel exlee
